When it comes to big data analytics, there’s still a lot of hype surrounding the field. Most mid-sized and large enterprises are now advised to set up protocols to collect as much customer data as possible for analytics. There’s also a bit of scaremongering about the negative consequences of not doing so.

But this creates an interesting scenario where companies are sitting on highly valuable customer data without really knowing how to use it. Sure, picking useful insights out of petabytes of data is complicated, but it’s not impossible.

I think the main problem at the moment is that a lot of managers have bought into the myth that big data will automatically solve business issues and enable them to compete effectively. When you believe this myth, you set yourself up to make some common mistakes.

But this isn’t the only big data problem afflicting businesses today; many other factors contribute equally to creating a big mess. Let’s explore these common mistakes further.

1. Blindly Collecting Data

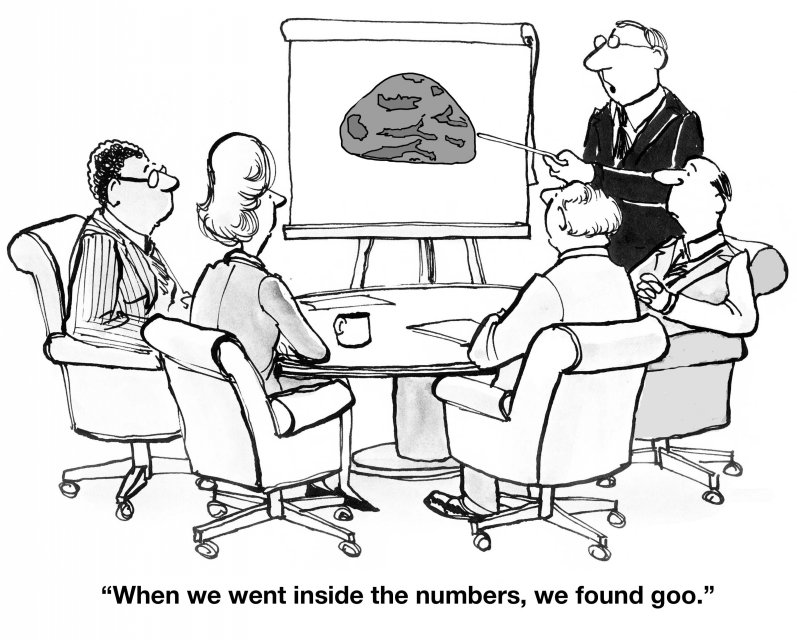

Indiscriminately collecting data just leads to a huge pile of data, not a treasure trove. Companies rush into big data without an effective strategy and end up with loads of data that they can’t make sense of.

This data is usually comprehensive, varied, extremely large, and often fast-changing. In its raw form, it usually doesn’t provide any value to business users who have no idea how to process it.

2. Being Unaware of the Limitations of Unstructured Data

Managers sometimes don’t realize the limits of unstructured data and are often overwhelmed when trying to figure out how to extract value from it. We are making some progress here, though: in recent times, steps have been taken to develop the context and techniques needed for text-based data mining.

This method can generate insights similar to those gained from structured data. But a lot of data isn’t text-based, as data is also collected from other sources like video.

To get past this hurdle, companies should start by using unstructured data to augment the accuracy and speed of their existing data analytics operation.

As a rule of thumb, it’s better to avoid trying to generate a hypothesis from unstructured data until there are seasoned experts in place who are able to do that for you.

3. Using More Data than Needed

Having loads and loads of data doesn’t mean you should use all of it. It’s the GOOD DATA that really provides value. If you can access the right data, adding more data to it doesn’t really make it any better.

In fact, it can complicate your analysis and make it difficult to interpret. Although it can seem wasteful not to use all the data that you’ve collected, doing so can undermine what you're trying to accomplish.

At the same time, managers also make the mistake of encouraging the rehashing and reframing of old data for the sake of presentations. This is a terrible idea, as it can overcomplicate and cloud the decision-making process. You also run the risk of misinterpretation, which can have grave consequences.

4. Completely Misunderstanding Integration

The main hurdle faced by businesses is compatibility and integration. Big data is made up of data that’s collected from multiple sources, so it’s not naturally congruent or easy to integrate.

This makes the whole process of compatibility and integration quite complicated and expensive. As a result, performing simple tasks like cross-referencing different types of data can be difficult.

A commonly proposed solution is to store the data in an unstructured format in data lakes, but this doesn’t solve the integration problem either, as integration is still extremely difficult to accomplish.
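To make the cross-referencing difficulty more concrete, here is a minimal sketch. It assumes two hypothetical systems (a CRM and a web store) that both identify customers by email but record it slightly differently. The records, the variable names, and the `normalize` helper are all made up for illustration.

```python
# Hypothetical records from two systems that both key customers by email.
crm_customers = {"Alice@Example.com": "Alice", "bob@example.com ": "Bob"}
web_orders = {"alice@example.com": 3, "bob@example.com": 1}

# A naive cross-reference on the raw keys finds no common customers,
# because of differences in letter case and stray whitespace.
naive_matches = crm_customers.keys() & web_orders.keys()


def normalize(email):
    """Trim whitespace and lowercase so equivalent emails compare equal."""
    return email.strip().lower()


# After normalizing the CRM keys, the two sources line up.
normalized_crm = {normalize(email): name for email, name in crm_customers.items()}
normalized_matches = normalized_crm.keys() & web_orders.keys()

print(f"matches on raw keys:        {len(naive_matches)}")
print(f"matches on normalized keys: {len(normalized_matches)}")
```

Even in this toy case, the two sources only line up after someone decides what counts as “the same customer”. Real sources tend to disagree in many more ways than case and whitespace.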

5. Turning Big Data into an IT Project

Some managers think that categorizing and “cleaning” collected data will make the analysis structured and normal. As a result, it becomes a project for the IT department to manage with no guarantee that the data will be validated and actually usable.

It’s hard to make a case to engage in this activity as the data collected might not be able to answer important business questions.

The only way to avoid this is to plan before collecting the data. For all elements in the process to be aligned with business goals, strategizing can’t be an afterthought.

The best way to start is by asking a strategic business question and then collecting the data to answer it. Moving forward in this manner can enable you to visualize the data and deliver actionable intelligence.

6. Thinking Error-Free Means Correct

Not getting any error messages when running your analytics code doesn’t mean that the query arrived at the right answer. This mistake usually happens when employees without a background in measurement take on these tasks because skilled and experienced analysts are in short supply.

Error messages only flag problems in the code itself, such as syntax errors. They say nothing about whether the query measures the right thing. So no matter what you do with bad data, you will end up with bad results (regardless of how you rearranged and processed it).
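As a rough illustration of code that runs cleanly but answers the wrong question, consider the sketch below. It assumes a hypothetical export in which a missing order amount is stored as `-1.0`. The data and the sentinel value are invented for the example.

```python
from statistics import mean

# Hypothetical order amounts; -1.0 is a made-up sentinel meaning "amount missing".
order_amounts = [120.0, 80.0, -1.0, 100.0, -1.0]

# This runs without any error message, but the sentinel values are
# silently treated as real amounts and drag the average down.
naive_average = mean(order_amounts)

# Dropping the sentinel first answers the question that was actually asked:
# what is the average value of the orders we have amounts for?
valid_amounts = [amount for amount in order_amounts if amount >= 0]
correct_average = mean(valid_amounts)

print(f"naive average:   {naive_average:.2f}")
print(f"correct average: {correct_average:.2f}")
```

Both calculations run without complaint. Only someone who knows how the data was recorded can tell which one is right.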

Finding the right match of skills and experience to perform the analytics is crucial to engaging efficiently in big data analytics. It’s also important to note that data is industry specific, so what someone was successful at doing within another industry may not directly translate into what you’re doing now.

7. Believing That Correlations Are Always Valuable

It’s difficult to establish causal relationships within large pools of data, as massive data sets usually exhibit very similar and sometimes even identical observations. This, in turn, can produce false correlations that push managers into making bad decisions.

Data is not objective, so you have to find ways of extracting meaningful actionable insights from it. The same rules apply to machine learning algorithms.

The challenge here is to develop the right strategies to efficiently make the right correlations with the data that’s available.
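One way false correlations like those described above can arise is simply from searching through a lot of variables. The sketch below is an illustration under stated assumptions, not a description of any particular company’s data: every series is independent random noise, and the sizes (`n_observations`, `n_candidates`) are arbitrary choices.

```python
import random
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)


random.seed(42)  # fixed seed so the sketch is repeatable

n_observations = 10   # few observations per metric
n_candidates = 1000   # many unrelated metrics to search through

# A target metric and many candidate metrics, all independent random noise.
target = [random.gauss(0, 1) for _ in range(n_observations)]
candidates = [
    [random.gauss(0, 1) for _ in range(n_observations)]
    for _ in range(n_candidates)
]

# Searching enough unrelated series tends to surface a "strong" match.
strongest = max(abs(pearson(target, series)) for series in candidates)
print(f"strongest correlation found in pure noise: {strongest:.2f}")
```

None of these series has anything to do with the target, yet the best match will look impressive on a slide. That’s why a correlation found by trawling through the data needs to be checked against fresh data before anyone acts on it.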

8. Assuming That Statistical Significance Provides Value

Keeping with the same line of thought, there’s always a challenge in establishing that statistical results are real and not the result of a sampling error. In an academic setting, your results have to be statistically significant to be published.

But in a business setting, statistical significance can provide zero value. Although it can show that there’s an effect, the size of the effect may not be enough to justify changing the way you do business.

So it’s a dangerous gray area: the more data you have, the more likely you are to find statistical significance that is meaningless in practice.
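The sketch below shows how sample size alone can turn a tiny effect into a “significant” one. It uses a standard pooled two-proportion z-test. The campaign numbers are hypothetical, and the helper name `two_proportion_p_value` is just a label chosen for this example.

```python
from math import erfc, sqrt


def two_proportion_p_value(conversions_a, visitors_a, conversions_b, visitors_b):
    """Two-sided p-value of a pooled two-proportion z-test."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_error
    # For a standard normal Z, P(|Z| > |z|) equals erfc(|z| / sqrt(2)).
    return erfc(abs(z) / sqrt(2))


# Hypothetical campaign: conversion moves from 10.0% to 10.1% in both cases.
# Only the number of visitors differs.
p_small = two_proportion_p_value(500, 5_000, 505, 5_000)
p_large = two_proportion_p_value(500_000, 5_000_000, 505_000, 5_000_000)

print(f"5,000 visitors per group:     p = {p_small:.3f}")
print(f"5,000,000 visitors per group: p = {p_large:.2e}")
```

The lift is the same 0.1 percentage points in both runs. With millions of visitors it clears the usual significance thresholds easily, but whether such a lift is worth changing the business for is a separate question that the p-value can’t answer.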

In conclusion, I believe the theme running through all of these mistakes is that highly skilled and experienced data analytics professionals are scarce.

As a result of this industry-wide shortage, it may be a while before we stop seeing these common mistakes repeating themselves.